Email marketing A/B and multivariate testing made simple

Imagine you’re trying to bake the perfect cookie. You might experiment with different amounts of sugar, types of flour, or baking times until you have the most mouth-watering, gooey-centered, crispy-edged cookie you’ve ever had.

That’s what A/B and multivariate email testing do for your email marketing strategy. Just like finding the right cookie recipe, these testing methods help you identify which email elements work well together and resonate best with your audience, improving engagement and deliverability.

It’s all about optimization—fine-tuning each component to ensure your emails are as irresistible as those perfect cookies. Let’s dive in and explore how you can use these techniques to sweeten your email game!

What is A/B testing in email marketing?

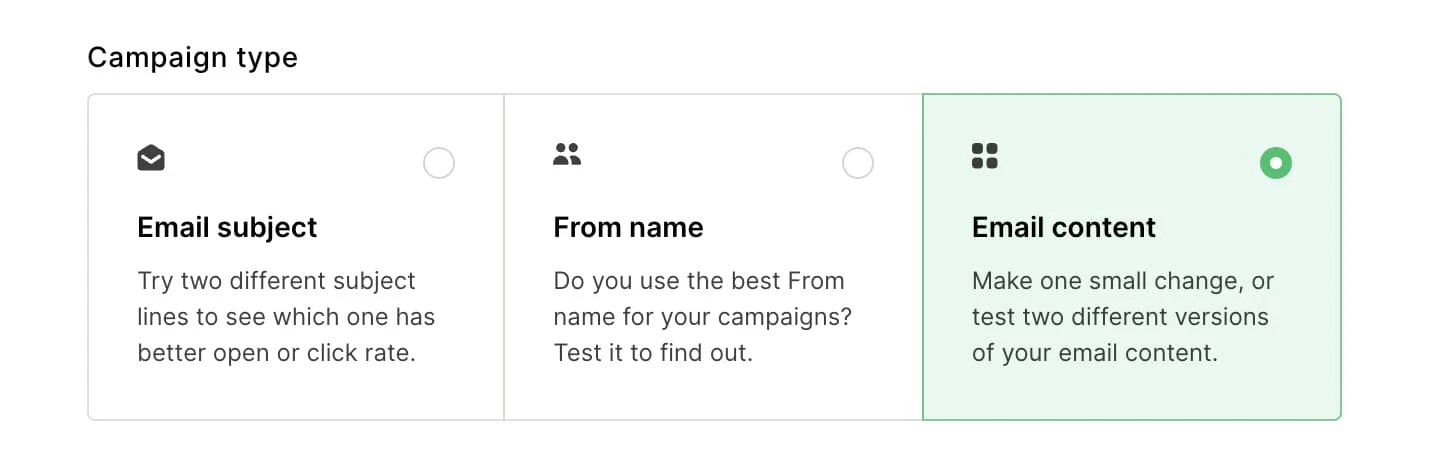

Email A/B split testing is a method where you send two versions of an email to different segments of your audience to see which one performs better. You change one variable (like the subject line, email content, or call to action) between the two versions to identify which version gets better results, such as more opens or clicks.

This helps you understand your audience and improve your future email campaigns.

So… what’s multivariate testing?

Multivariate testing in email marketing is like A/B testing, but instead of changing just one thing, you change multiple variables at once. For example, you might test different combinations of subject lines, images, and call-to-action buttons all at the same time.

This helps you see how different elements work together and find the best combination to improve your email campaign's performance. It's a more complex way to understand what your audience likes best.

The key difference is that A/B testing changes one variable, while multivariate testing changes several at once. Because every combination of variables becomes its own version, and each version needs enough recipients to give reliable results, multivariate tests generally require a larger sample size.
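To see why multivariate tests need more recipients, it can help to count the versions. The sketch below is only an illustration: the element values and the `recipients_per_variant` helper are made up for this example and are not part of any email tool.

```python
from itertools import product

# Hypothetical elements to test; the values are placeholders.
subject_lines = ["3 ways to boost your productivity",
                 "Are you using these 3 tricks to boost productivity?"]
images = ["static image", "GIF"]
cta_buttons = ["Shop now", "Get my discount"]

# Every combination of elements becomes its own email version.
variants = list(product(subject_lines, images, cta_buttons))


def recipients_per_variant(list_size: int, variant_count: int) -> int:
    """Recipients each version gets if the list is split evenly."""
    return list_size // variant_count


print(len(variants))                                # number of versions
print(recipients_per_variant(4000, len(variants)))  # recipients per version
```

With two options for each of three elements, a list that would give an A/B test 2,000 recipients per version gives this multivariate test far fewer per version.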

3 things to consider before testing

Before you jump right in, there are a few things you need to consider, like which email variables to test and why. Let’s break down some of the key things you should have nailed down before you start running your A/B or multivariate test.

1. Choosing the right variables

When you’re starting out, it’s important not to overwhelm yourself with testing too many variables at once. Here’s how you can decide which ones to focus on:

Identify your goals: Think about what you want to achieve with your email campaign. Are you trying to increase open rates, click-through rates, conversions, or something else? Your goals will guide you in choosing which variables to test.

Prioritize impact: Some elements are more likely to have a significant impact on your results. For example, subject lines can greatly affect open rates, while CTA buttons might influence click-through rates. Start with the variables that you think will have the biggest effect.

Look at past data: If you’ve sent emails before, check out what’s worked well or not so well in the past. This can give you clues about which areas might need improvement and are worth testing.

Start simple: Begin with one or two variables. For example, you could test different subject lines and sender names first. Once you get the hang of it and see some results, you can move on to more complex tests.
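As a rough illustration of looking at past data, the sketch below ranks a few past campaigns by open rate and click rate to suggest where to test first. The campaign names and numbers are invented, the `Campaign` class is hypothetical, and rates are assumed to be calculated against delivered emails (your email tool may define them differently).

```python
from dataclasses import dataclass


@dataclass
class Campaign:
    name: str
    delivered: int
    opens: int
    clicks: int

    @property
    def open_rate(self) -> float:
        # Assumption: open rate = opens / delivered emails.
        return self.opens / self.delivered if self.delivered else 0.0

    @property
    def click_rate(self) -> float:
        # Assumption: click rate = clicks / delivered emails.
        return self.clicks / self.delivered if self.delivered else 0.0


# Made-up example data, not real results.
past_campaigns = [
    Campaign("Spring sale", delivered=2000, opens=520, clicks=90),
    Campaign("Product update", delivered=2000, opens=380, clicks=120),
    Campaign("Weekly digest", delivered=2000, opens=610, clicks=60),
]

# A low open rate hints at testing the subject line or sender name;
# a low click rate hints at testing the content or CTA.
weakest_opens = min(past_campaigns, key=lambda c: c.open_rate)
weakest_clicks = min(past_campaigns, key=lambda c: c.click_rate)

print(f"Lowest open rate: {weakest_opens.name} ({weakest_opens.open_rate:.1%})")
print(f"Lowest click rate: {weakest_clicks.name} ({weakest_clicks.click_rate:.1%})")
```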

2. Sample size

The number of people you send your test emails to (your sample size) is crucial for getting reliable results. Here’s what to keep in mind:

Ensure enough recipients: To get statistically significant results, you need to have enough recipients in each group you’re testing. If your list is too small, the results might not be reliable. There are online calculators, like the Evan Miller calculator, that can help you figure out the right sample size based on your list size and the confidence level you want.

Split evenly: When you’re conducting an A/B test, split your list evenly between the different versions you’re testing. This helps ensure that the comparison is fair. If your email list is big enough (1,000+), we recommend sticking to the 80/20 rule (also known as the Pareto principle). This involves sending Version A to 10% of your target audience, Version B to another 10%, and the winning version to the remaining 80%.

Consistency: If you’re testing multiple variables, make sure each test has a consistent sample size. For example, don’t test one subject line on 100 people and another on 500 people.
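The points above can be sketched in a few lines of Python. This is a minimal illustration, not how any particular calculator or email tool works: `sample_size_per_variant` uses a standard normal-approximation formula for comparing two rates, and `split_for_test` is a hypothetical helper that shuffles a list into two equal test groups plus a remainder. The 95% confidence and 80% power defaults are common conventions, assumed here.

```python
import math
import random
from statistics import NormalDist


def sample_size_per_variant(baseline_rate, expected_rate,
                            alpha=0.05, power=0.8):
    """Approximate recipients needed per version to detect the difference
    between two rates (two-sided test, normal approximation)."""
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)
    z_beta = NormalDist().inv_cdf(power)
    variance = (baseline_rate * (1 - baseline_rate)
                + expected_rate * (1 - expected_rate))
    effect = expected_rate - baseline_rate
    return math.ceil((z_alpha + z_beta) ** 2 * variance / effect ** 2)


def split_for_test(recipients, test_fraction=0.10, seed=None):
    """Shuffle the list and return (group_a, group_b, remainder).
    With test_fraction=0.10 this is the 10% / 10% / 80% split."""
    shuffled = list(recipients)
    random.Random(seed).shuffle(shuffled)
    group_size = round(len(shuffled) * test_fraction)
    group_a = shuffled[:group_size]
    group_b = shuffled[group_size:2 * group_size]
    remainder = shuffled[2 * group_size:]
    return group_a, group_b, remainder


# Example: hoping to lift a 20% open rate to 25%.
needed = sample_size_per_variant(0.20, 0.25)

# Placeholder addresses for illustration only.
subscribers = [f"subscriber{i}@example.com" for i in range(1000)]
group_a, group_b, remainder = split_for_test(subscribers, seed=42)

print(f"Needed per version: {needed}")
print(f"Test groups: {len(group_a)} and {len(group_b)}, remainder: {len(remainder)}")
```

Comparing the needed number with the size of each test group shows whether a list is large enough for the difference you hope to detect. Smaller expected differences need far more recipients.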

3. Analyzing results

Okay, yes, this technically happens after you run your first test. But it’s nice to know what to expect so you’re not just left with a pile of data you don’t know what to do with. Once your test is complete, it’ll be time to analyze the results:

Look at key metrics: Depending on your goals, focus on the metrics that matter most, such as open rates, click-through rates, and conversion rates.

Statistical significance: Ensure that the differences in your results are statistically significant and not just due to random chance. Again, online tools can help with this.

Iterate and learn: Use the insights from your tests to refine your emails. Testing is an ongoing process, so keep experimenting with different elements to continually improve your results.
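If you are curious what a significance check looks like under the hood, here is a minimal sketch using a two-proportion z-test, one common way to compare two rates. It is an assumption that this matches what any given online tool does, and the function name and example numbers are made up for illustration.

```python
import math
from statistics import NormalDist


def two_proportion_z_test(successes_a, total_a, successes_b, total_b):
    """Return (z, p_value) for the difference between two rates,
    using a pooled two-sided z-test."""
    rate_a = successes_a / total_a
    rate_b = successes_b / total_b
    pooled = (successes_a + successes_b) / (total_a + total_b)
    std_error = math.sqrt(pooled * (1 - pooled)
                          * (1 / total_a + 1 / total_b))
    if std_error == 0:
        # Both versions had 0% or 100% success: no measurable difference.
        return 0.0, 1.0
    z = (rate_b - rate_a) / std_error
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    return z, p_value


# Example: Version A got 200 opens from 1,000 sends, Version B got 250.
z, p_value = two_proportion_z_test(200, 1000, 250, 1000)

# A p-value below 0.05 is a common (not universal) threshold for
# calling a difference statistically significant.
print(f"z = {z:.2f}, p = {p_value:.4f}, significant: {p_value < 0.05}")
```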

8 multivariate and A/B testing ideas

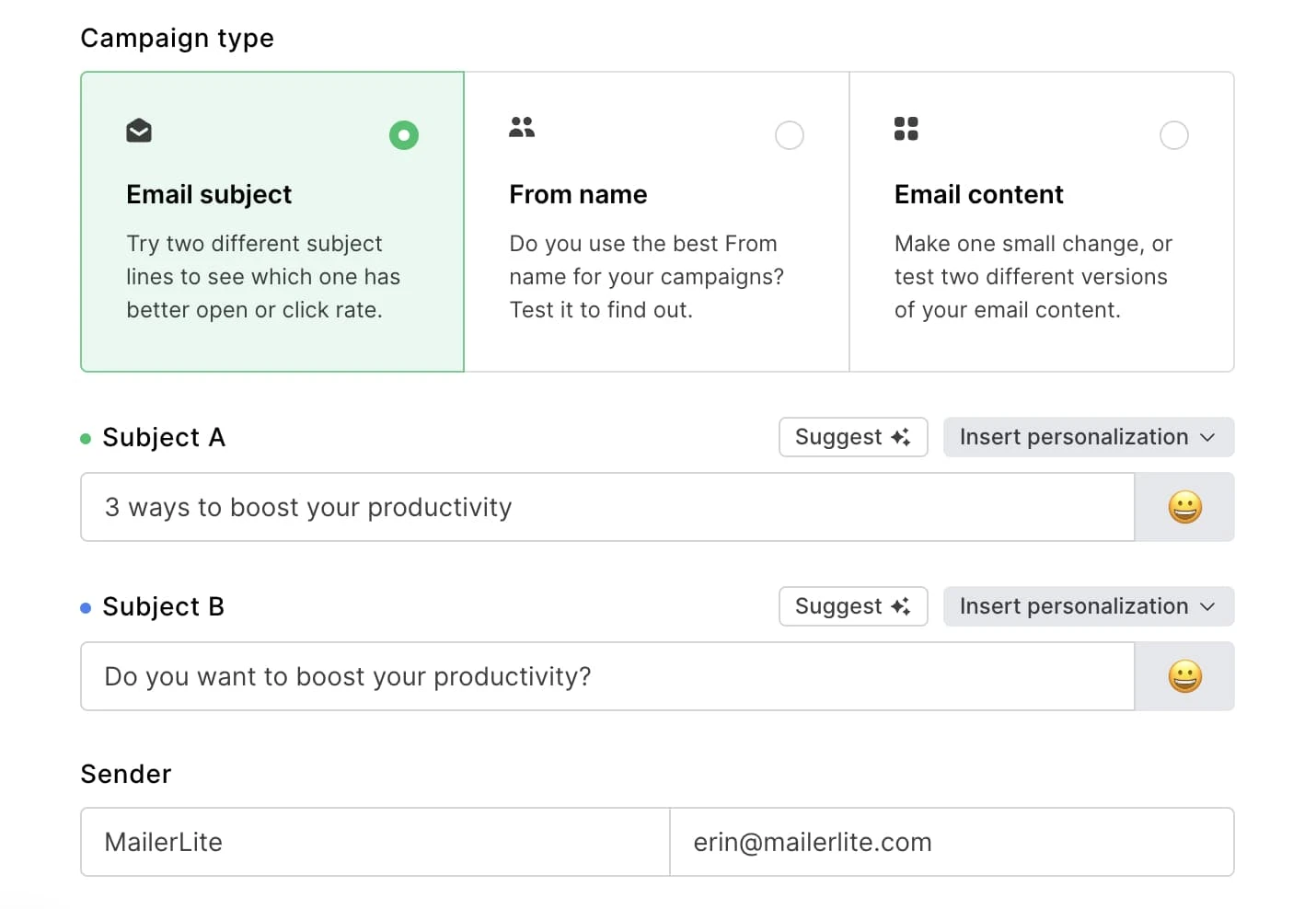

1. Email subject line

An effective subject line can make all the difference in a successful campaign. Testing subject lines is a good way to start optimizing your emails. MailerLite makes it easy to test subject lines with a simple click.

When you are on the A/B or multivariate test page, select Email subject and type in the 2 subject lines you want to test.

Not sure what subject lines to test? Use the AI subject line generator to generate multiple ideas in seconds. Or try testing some of the approaches below.

Version A: 3 ways to boost your productivity

Version B: Are you using these 3 tricks to boost productivity?

Version A: 20% off sitewide, this week only!

Version B: Janet, we’re giving you 20% off this week!

Version A: Lucky you, take 10% off for St Patrick’s Day

Version B: Lucky you, take 10% off for St Patrick’s Day ☘️

Version A: Get your free sample box today

Version B: Last chance to get your free sample box!

Need help setting up your A/B test? This step-by-step tutorial video shows you the way:

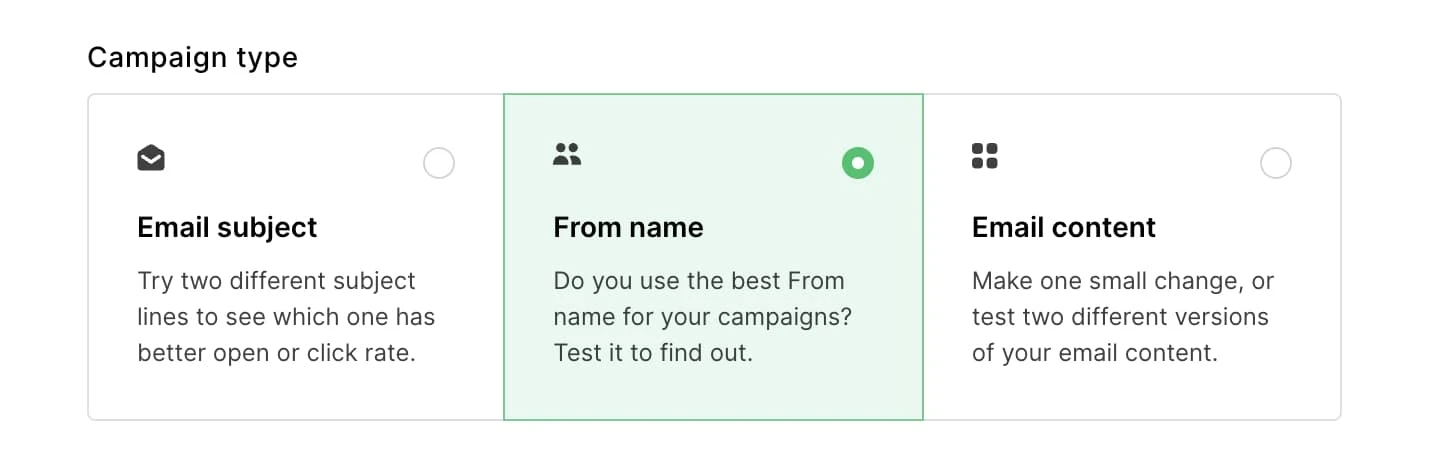

2. Test your sender name

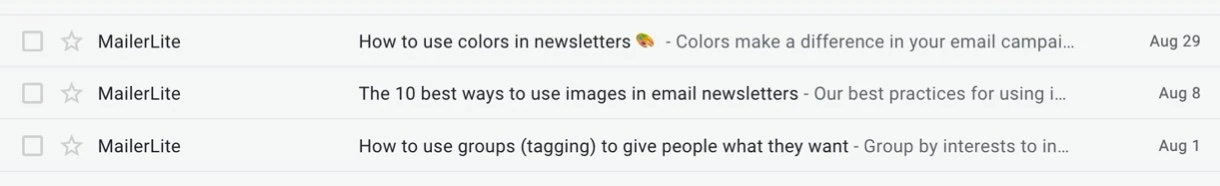

The ‘From’ field can be even more important than your subject line. If you have a personal connection with your audience, they will open your email regardless of the subject line.

Case in point: you wouldn’t care what the subject line is when you’re getting an email from your mom. We usually open and read emails that are sent by someone we trust and know.

Here are some ways you can change the ‘From’ field and test it.

If your campaign comes from a company, experiment with using your company name versus inserting the name of one of your employees (e.g. the marketing person or CEO/founder).

Version A: MailerLite

Version B: Ilma from MailerLite

If you’re a blogger and your name is your brand, you can test whether just your first name or your entire name works best.

Version A: Seth

Version B: Seth Godin

If you’re sending emails from info@yourbusiness.com, you can test whether readers are more willing to open your emails when they’re sent from an actual person working at your firm.

Version A: info@yourbusiness.com

Version B: dave@yourbusiness.com

3. Test email content

Testing the content of your newsletter can be tricky because if you’re not consciously choosing your variables, it’s hard to identify what causes a conversion. Here are a few different aspects of your email content that can be reliably tested:

Call-to-action (CTA): Test the placement, wording, frequency, or button color of your main CTA

Postscript: See if adding your main CTA to the P.S. leads to more conversions

Images: Test between 2 different visuals, GIFs vs. static images, or see if your readers find no images at all more engaging

Body text: Test the length, tone or style of your email copy. Does a friendly approach work better, or something more educational?

Colors and fonts: See if your subscribers prefer something brighter or something more neutral

5. Test your preheader text

When customers receive your email, the subject and preheader text will be the key elements they use to determine if opening and interacting with your email is worth their time.

The preheader text is like a continuation of a subject line, so you can test it in the same way as a subject line: ask a question, create a sense of urgency, and so on.

You can implement multivariate and A/B tests into your automation workflows. Whether you just want to optimize your welcome email or test every message in a complex onboarding sequence, A/B testing for automation will help you fine-tune your email automations and clear a path to email marketing success.

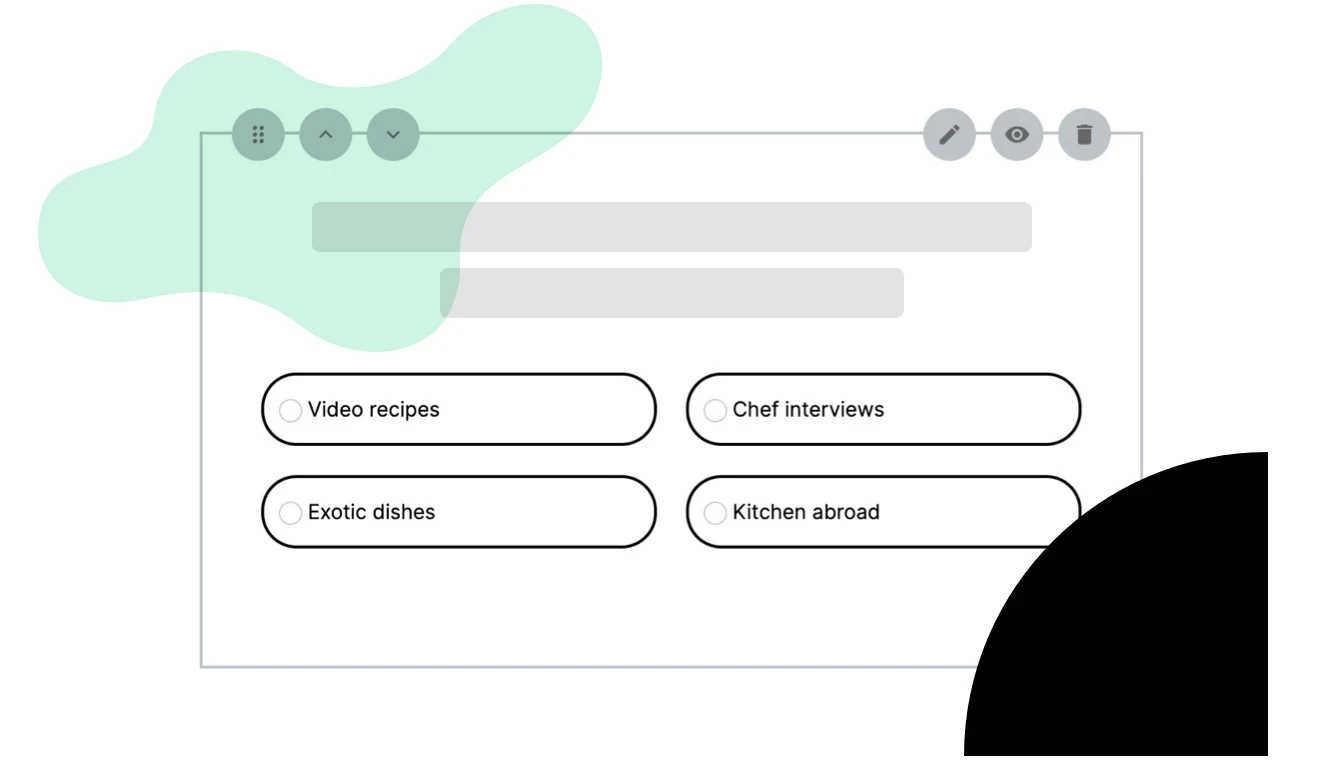

6. Interactive content

See whether or not your subscribers want to get involved in your newsletter with interactive content! Test whether or not a survey or quiz will lead to more engagement.

7. Offers and incentives

Go further than testing just your email marketing campaigns—see which offers lead subscribers to click more. Some ways you could test your offers and incentives include:

Percentage discount vs. fixed amount: See what kind of offer is more appealing to subscribers

Incentive placement: Placement can impact engagement. Test your offer above and below the fold

Countdown timers: Do your subscribers act fast or shy away from urgency?

8. Footer content

Don’t neglect this ever-overlooked section of your newsletter. It can be just as make-or-break as your headline. Variables to look at in your footer include:

Number and type of social media icons: Too many can be overwhelming, while too few might result in missed opportunities for engagement

Placement of social media icons: Test where they’re most effective

Length and placement of disclaimers: Make sure they’re present without being too intrusive

A/B testing for landing pages

The fun doesn’t stop with emails! Did you know that you can also A/B test your landing pages with MailerLite? Just like with your emails, you can test different versions of your landing pages to find out which one is most effective and make some tweaks accordingly.

You can test lots of different elements in your landing pages, including:

Product descriptions: Find out what resonates most with your page visitors

CTA: Test elements like the color scheme, positioning, sizing and copy

Headline and copy: Learn what messaging triggers the most engagement

Images and videos: Check whether your page visitors prefer minimalist pages, or louder ones with lots of graphics and videos

With MailerLite, you can test up to 5 different content combinations by splitting traffic between the landing pages, and see the results in your dashboard. So actually, it’s really more of an A/B/C/D/E test!

Why you should start A/B testing your emails today

As you’ve learned, there is nothing mysterious or complicated about A/B testing. In fact, email marketing is much harder without A/B testing. Your campaigns will not improve without learning what works and what doesn’t.

With MailerLite, it’s super easy to set up an A/B test. Start small and try testing your subject line to improve your open rates. Once you get the hang of it, you can go on and try one of the A/B tests above.

The only challenging thing about A/B testing is that you’re never really done. There is no end to what can be tested and what knowledge can be gleaned from testing. If something works well in a few A/B split tests—keep doing it, and move on to test another aspect of your email. Also remember, what works today will not necessarily work tomorrow.

Ready to have fun? Start A/B testing today to improve your email and landing page conversions tomorrow.