A/B testing email marketing examples to improve campaigns, landing pages, and more

Tania and Keren, Customer support team.

Who says experiments are just for zany lab-coated scientists? The art of A/B testing allows you to test different versions of your email marketing content and see which one creates a bigger reaction.

Stuck between red or yellow banners? Run an A/B test and let the data decide. Emoji in the subject line, or go old school with just text? Put both under the microscope and see which gets the most eyeballs!

There are so many testing possibilities, it can be hard to know where to start. We compiled these A/B testing email marketing examples to help you! So, let's strap on our thinking caps, dive into the world of A/B testing, explore some practical case studies, and ignite the spark for you to run your own experiments.

What A/B testing is, and why you’ll love it

A/B testing, also known as A/B split testing, is the method of comparing two different versions of a variable (such as web pages, apps and emails) to see which one performs better using a sample of your audience.

You don’t have to read your audience’s minds to get results. With A/B testing, you can make data-driven decisions about your digital marketing campaigns.

There’s a whole heap of things you can test, including text and copy, color designs, layouts, subject lines and more, to find what catches your audience’s attention. Let’s jump into some real-life examples. If you'd like a more in-depth explanation of A/B testing, take a look at our ultimate guide.

5 A/B testing email marketing examples to improve metrics

When it comes to A/B split testing your email marketing campaigns, there are a lot of variables you can choose from. You might want to test what kind of subject line is more appealing to your subscribers, compare 2 different templates, or find out whether your readers prefer plain text over colorful HTML emails.

Whatever you decide to test, just remember to test one variable at a time. That way you can properly measure how it affects your campaign. Here are some of the tests we’ve run in our very own campaigns at MailerLite.

For emails, MailerLite's A/B testing feature compares two versions by sending them to a sample of your email list, usually 20% of it. The ‘winner’ is automatically chosen after a defined time frame (but you can also select the victorious email manually if you prefer).
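To make the sampling idea concrete, here is a minimal Python sketch of how a list could be divided for a two-version test. It is an illustration only, not MailerLite's actual implementation: the function name `split_for_ab_test`, the even split between versions, and the example addresses are all assumptions.

```python
import random


def split_for_ab_test(subscribers, test_fraction=0.2, seed=None):
    """Hypothetical helper: return (group_a, group_b, remainder).

    A random sample of `test_fraction` of the list is split evenly
    between version A and version B (the even split is an assumption).
    The remainder is held back until a winner is chosen.
    """
    rng = random.Random(seed)
    shuffled = list(subscribers)  # copy so the caller's list is untouched
    rng.shuffle(shuffled)

    test_size = round(len(shuffled) * test_fraction)
    half = test_size // 2

    group_a = shuffled[:half]
    group_b = shuffled[half:test_size]
    remainder = shuffled[test_size:]
    return group_a, group_b, remainder


# Made-up list of 1,000 subscribers, tested on 20% of them
subscribers = [f"user{i}@example.com" for i in range(1000)]
group_a, group_b, remainder = split_for_ab_test(subscribers, seed=42)
print(len(group_a), len(group_b), len(remainder))  # 100 100 800
```

Shuffling before slicing keeps the two test groups random, so neither version is favored by, say, sign-up date order.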

Side note: Please remember that every A/B test is specific to the audiences and goals of the test. These examples are meant to inspire your own tests. The results are not best practices.

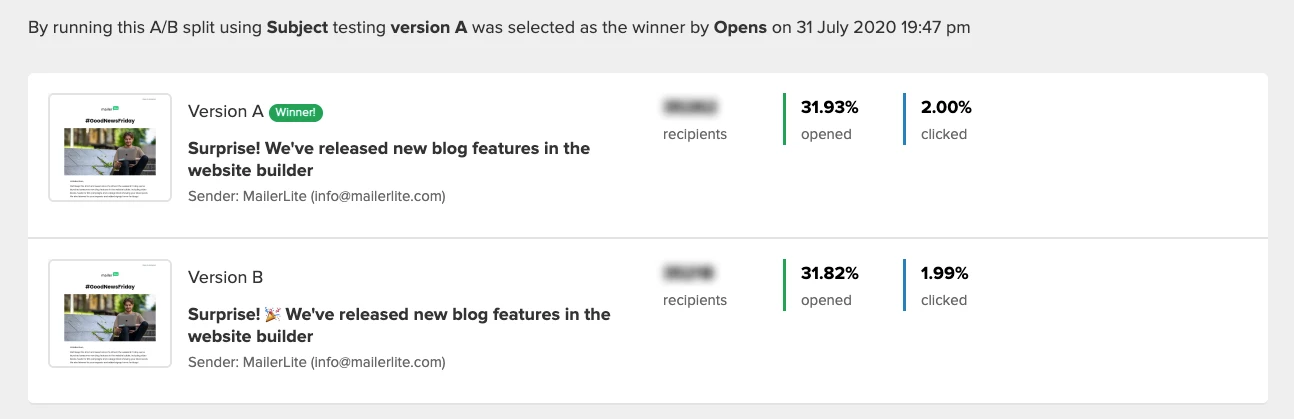

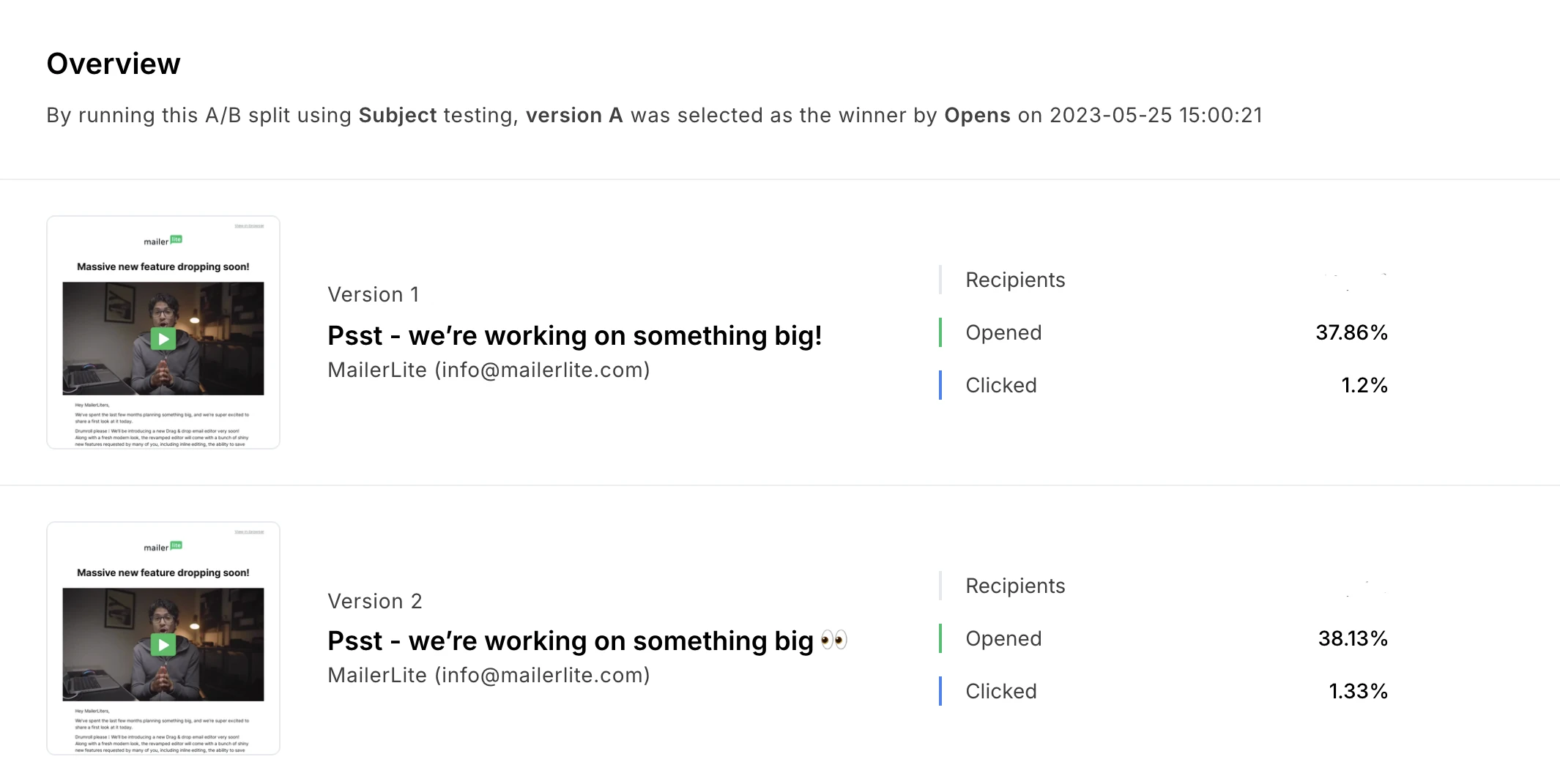

1. Emojis vs. no emojis in subject lines

Emojis in email subject lines can be a sticky topic. Some people avoid them at all costs, while others think they’re the best thing since sliced bread! We fall into the latter category, but we weren’t sure whether our subscribers felt the same way.

When we first started testing emojis in our subject lines, our subscribers weren’t super into it. But for the last couple of years, our A/B split tests for emojis in the subject line have consistently shown that emojis are incredibly effective—for our audience. Try it for yourself to see if it works for yours.

Emojis in 2020

Emojis in 2025

Don’t take our results as gospel though. Every audience is different, and open rates will also depend on factors such as the subject line copy and length.

If in doubt, trial the same subject line with and without an emoji, and repeat this A/B test until you see a clear pattern.

For the most accurate results, allow at least 1-2 hours of testing before selecting a winner. You also need to choose a sending window with the best chances of higher open rates, both during and after the test.

For example, our 2025 data shows that open rates peak between 8 AM and 11 AM, so you could send out the test at 9 AM for 1 hour, and then send the winning version at 10 AM to make the most of this window.

Use the right A/B testing tool

Did you know that you can run A/B tests with MailerLite? It lets you split-test your email campaigns, landing pages and more, to find the most effective version!

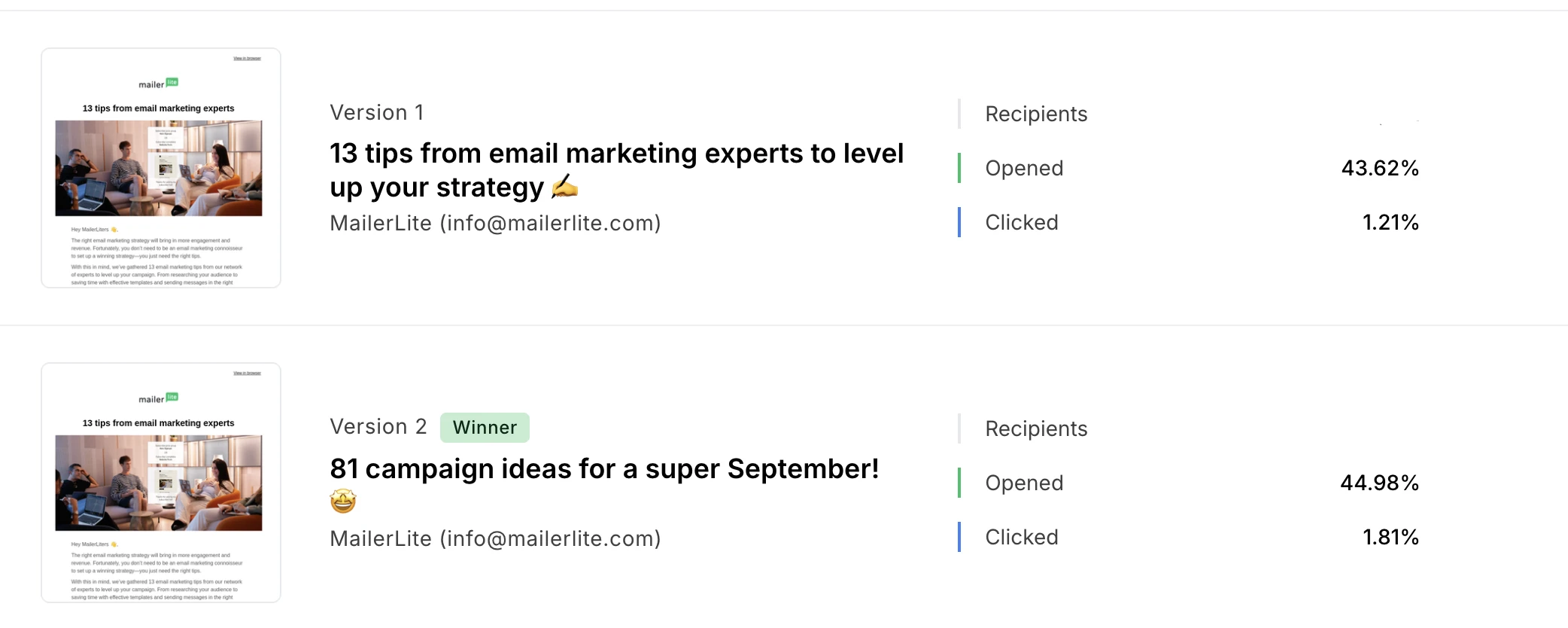

2. Longer vs. shorter subject lines

Another variable for testing subject lines is the subject length. Do subscribers need more information and some marketing jazz, or does clear and concise do the job? Well, we put that to the test.

As it turns out, quick and concise reigns supreme when it comes to MailerLite subscribers! This time, we set the A/B test to select the winner by clicks rather than opens, because Apple Mail Privacy Protection has made open rates less reliable. This metric also helped us see who was motivated by the subject line to actually click the link to the article.

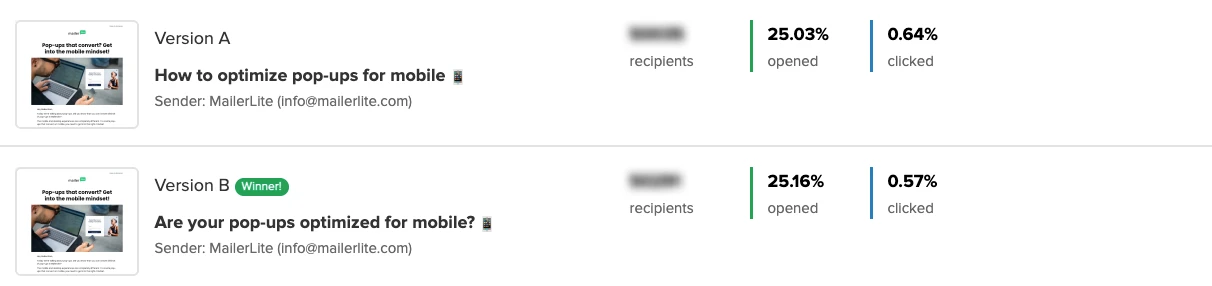

3. Starting the subject line with a question

Subject lines are just a smattering of words, but they have a big impact on email engagement, so we wanted to get it right. But what types of wording are most effective?

One idea we tried was opening with a question to make it feel more personal to the reader.

For example, when sharing about responsive pop-up designs, we compared "How to optimize pop-ups for mobile📱" with "Are your pop-ups optimized for mobile?📱"

In this test, the question version got the highest open rate. This is a super easy and rewarding variable you can test in your own email marketing strategy today!

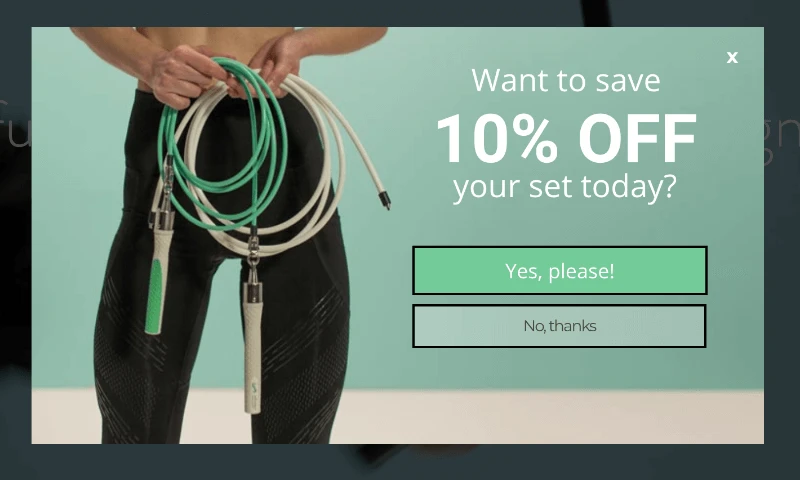

4. A/B testing GIFs and images

We can all agree that images, GIFs and visuals in general are an important design element of any newsletter. But does their positioning in the email influence conversions?

We wanted to find out, so we A/B tested whether images and GIFs at the beginning of the email could increase click rates.

GIFs in email

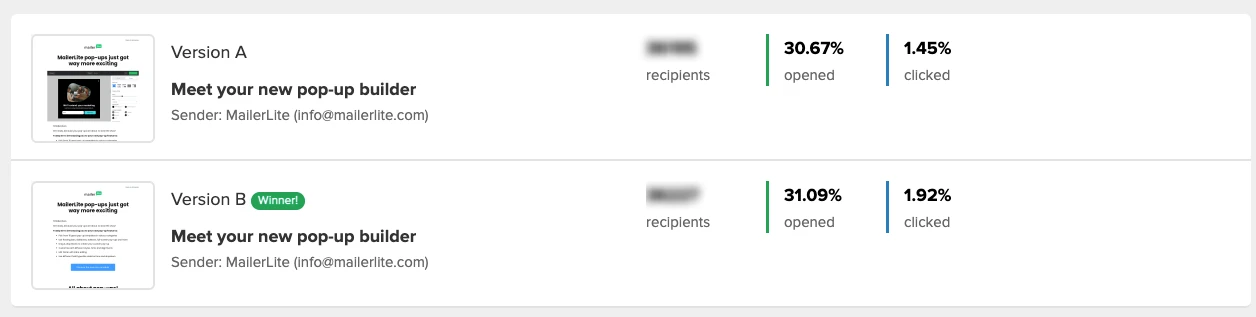

Email GIFs are a dynamic way to catch people’s attention, so naturally, we inserted one at the beginning of our campaign to promote our new pop-up builder! But we were also intrigued to see how much of a difference it would make, so we created a test variation without the GIF at the beginning.

Take a good look at both emails below and place your bets—which version got the highest clicks?

To our surprise, the email without the GIF at the beginning of the email got much higher click rates.

This made us wonder if we should be including graphics at the beginning of the newsletter at all, or whether a plain text email was the way forward. We decided to test it again with an image at the beginning of the email.

Images in email

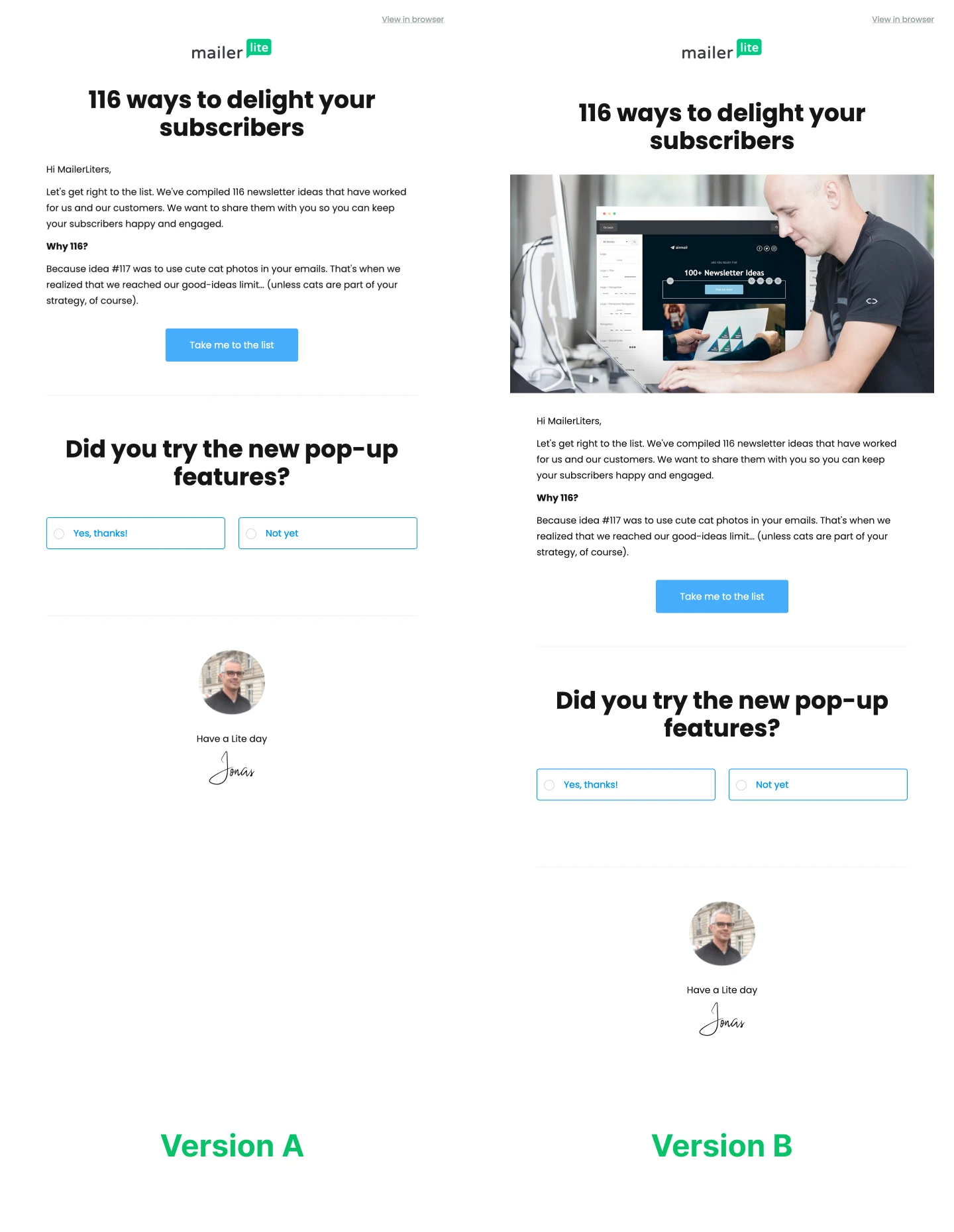

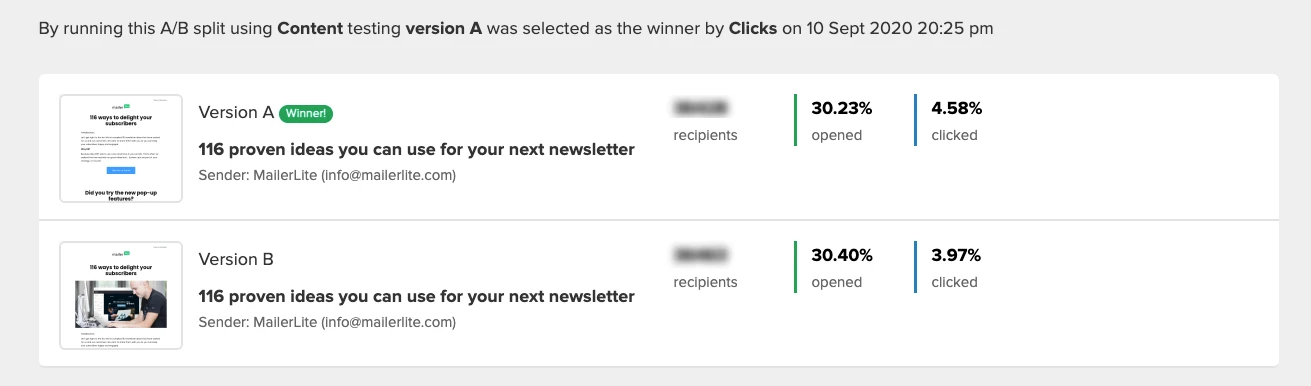

This time, we were promoting an article on 116 newsletter ideas. We created one plain text version of the email, and another with a cover image from the article.

You know the drill—take a look and see if you can guess which version won the day…

The email without the image at the beginning had much higher click rates!

Why did the campaigns without GIFs and images at the beginning have higher click rates?

There are a couple of possible reasons for this:

First off, bright images and dancing GIFs could be a distraction, stopping people from scrolling down and converting.

If the graphics take more time to load, readers might not get to the content quickly enough to convert.

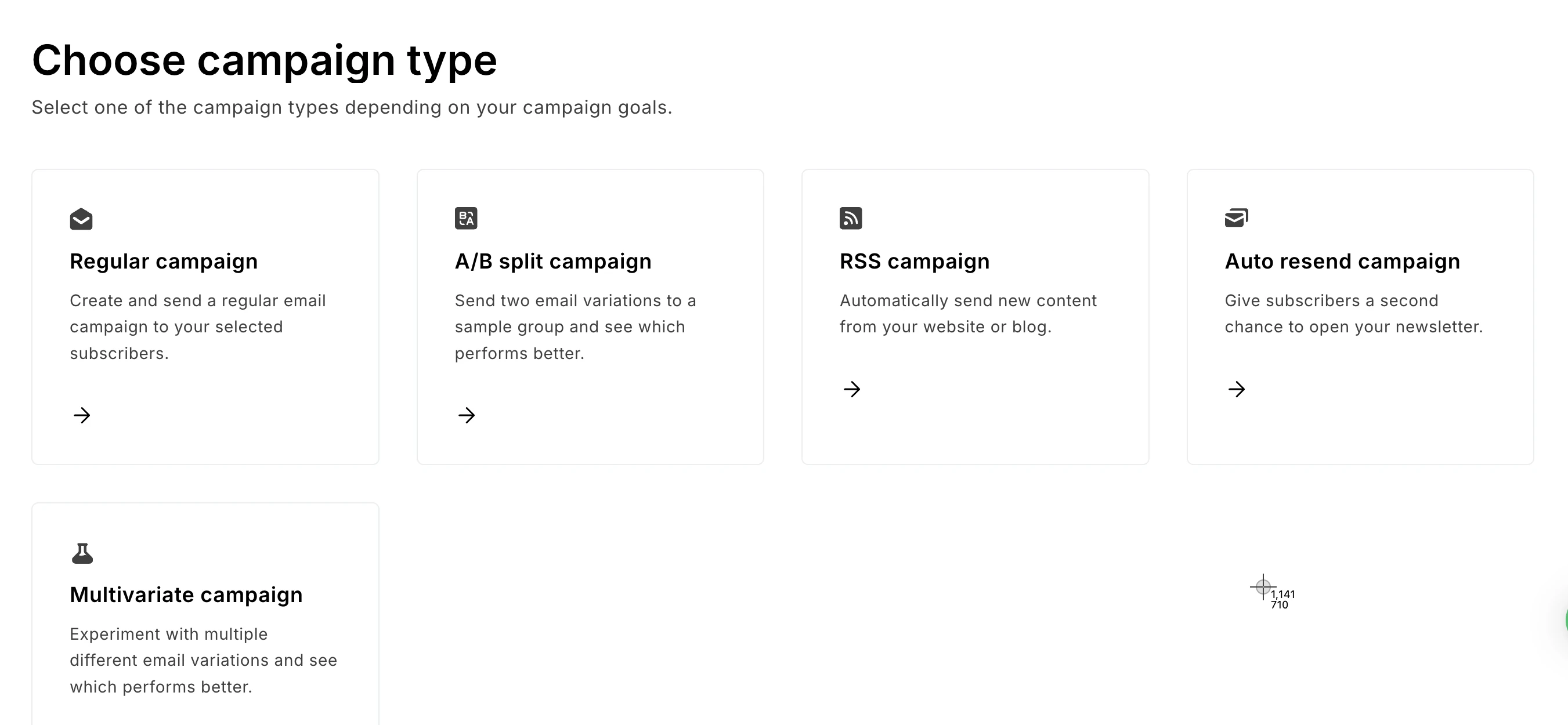

How to send an A/B split campaign in MailerLite

With MailerLite, you can send up to 5 versions of your email with differences in the following:

Email subject

Email content

Sending time

From name

To start a campaign, select Campaigns on your dashboard and then click on Create campaign.

After that, choose A/B split campaign.

After creating your campaign versions, you can select the size of your test group, how long the test will run for, and whether the winner will be selected by opens or clicks.
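If you're curious what "selecting a winner by opens or clicks" boils down to, here's a rough Python sketch. The `VersionStats` class, the `pick_winner` function and all the numbers are hypothetical and for illustration only; this is not how MailerLite is implemented.

```python
from dataclasses import dataclass


@dataclass
class VersionStats:
    """Hypothetical results for one version at the end of the test window."""
    name: str
    delivered: int
    opens: int
    clicks: int


def pick_winner(versions, metric="clicks"):
    """Return the version with the highest open rate or click rate."""
    if metric not in ("opens", "clicks"):
        raise ValueError("metric must be 'opens' or 'clicks'")

    def rate(version):
        # Guard against division by zero if nothing was delivered
        if version.delivered == 0:
            return 0.0
        return getattr(version, metric) / version.delivered

    return max(versions, key=rate)


# Illustrative, made-up numbers
results = [
    VersionStats("A", delivered=500, opens=210, clicks=40),
    VersionStats("B", delivered=500, opens=190, clicks=55),
]
print(pick_winner(results, "clicks").name)  # B
print(pick_winner(results, "opens").name)   # A
```

Notice that the two metrics can disagree, which is why it's worth deciding up front which one matches the goal of your test.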

For a step-by-step guide on setting up an email A/B test, check out the video tutorial below.

5. A/B testing automations

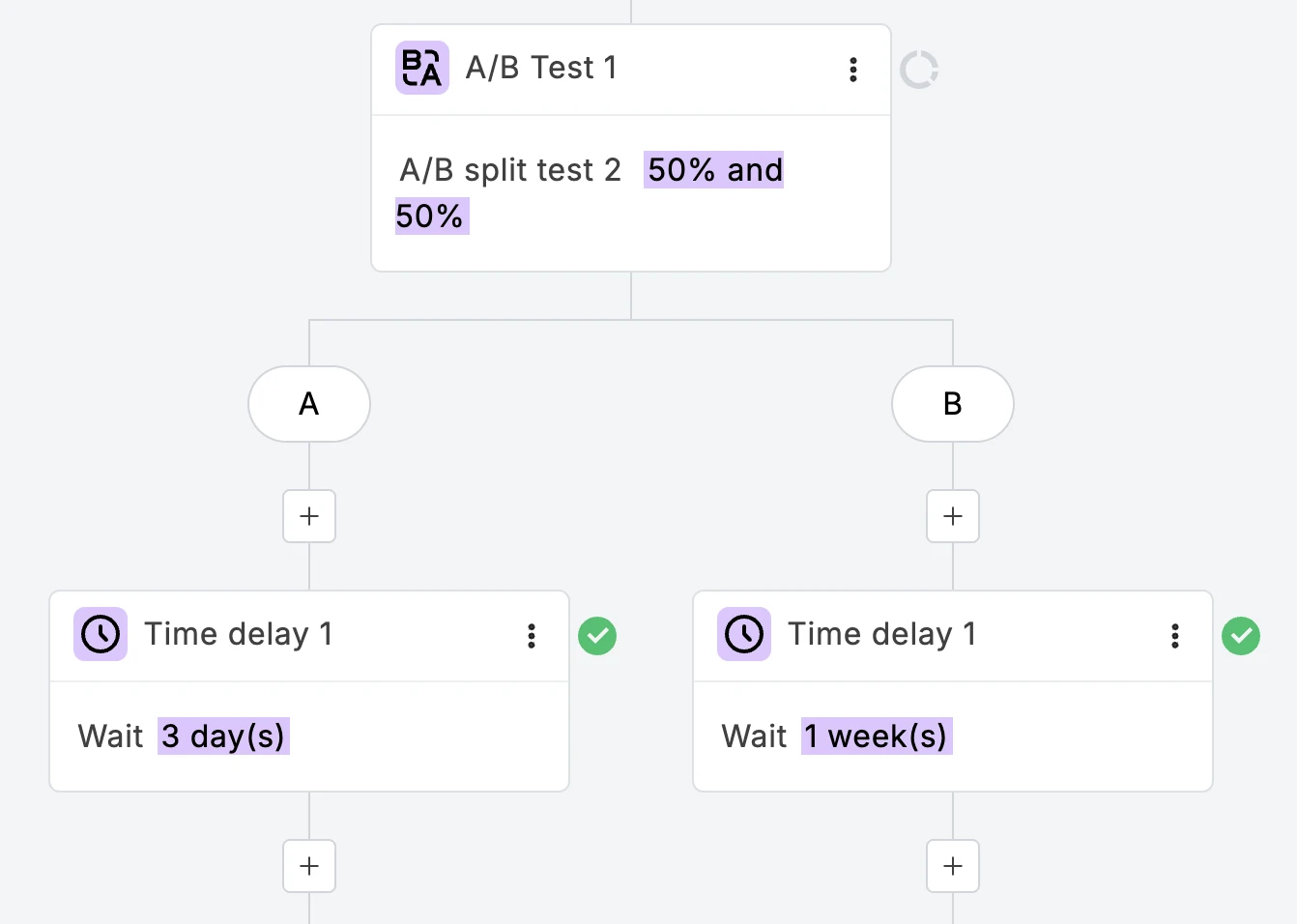

A/B testing isn’t just reserved for email campaigns. If you use MailerLite, you can easily add A/B split testing steps to your automation workflows. That means you can test emails, delays and action steps to find out if your workflows are working effectively.

In the example above, we were able to test if shorter or longer delays were more effective in automated sequences.

In the following example, we took it a step further and used the delay split test right at the beginning of the workflow to find out the optimal email send time.

How to A/B test automation steps in MailerLite

With A/B testing for automation, you can test up to 3 versions of Delay and Email steps. There are many ways you can use the A/B split test step. For example, you can:

Test different automation email subject lines: Find out which version yields the most opens/clicks

Test different sender names: See if subscribers resonate more with a person or a brand

Test emails with different content: Find out what kind of newsletter engages more subscribers

Test different delay times: Find the appropriate time to send follow-up emails that get more conversions

To add a split test to your automation workflow:

1. Open your automation in the workflow editor.

2. Click the + icon and select A/B testing.

3. In the sidebar, give your split test a name and use the slider to set the percentage of traffic you’d like to send down each path.

4. If needed, add a third path by clicking + Add path C.

5. Click each + icon to add your variables. You can choose to test Delay steps, Email steps, or a combination of both.

6. Once you’re happy with your split test, click the single + icon at the bottom to continue building your workflow.
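The traffic split in step 3 can be pictured as assigning each subscriber to a path according to the percentages you set. Below is a minimal sketch of one common way to do this, using a hash of the subscriber ID so that the same person always lands on the same path. The hashing approach, the `assign_path` name and the path labels are assumptions for illustration, not a description of how MailerLite distributes traffic.

```python
import hashlib


def assign_path(subscriber_id, weights):
    """Hypothetical helper: map a subscriber to a path by percentage.

    `weights` maps path names to whole-number percentages that sum to 100,
    for example {"A": 50, "B": 50} or {"A": 40, "B": 30, "C": 30}.
    """
    if sum(weights.values()) != 100:
        raise ValueError("percentages must sum to 100")

    # Hash the ID into a stable bucket from 0 to 99
    digest = hashlib.sha256(subscriber_id.encode("utf-8")).hexdigest()
    bucket = int(digest, 16) % 100

    # Walk the cumulative percentage ranges until the bucket fits
    upper = 0
    for path, percentage in weights.items():
        upper += percentage
        if bucket < upper:
            return path
    raise AssertionError("unreachable when percentages sum to 100")


# Made-up subscriber ID with a three-path split
path = assign_path("subscriber-123", {"A": 40, "B": 30, "C": 30})
print(path)
```

Because the assignment depends only on the ID and the weights, re-running it gives the same answer, which keeps a subscriber from bouncing between variations mid-test.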

There are so many more things you can experiment with in your email marketing strategy, including:

Personalization: Does including the subscriber’s name in the subject line increase opens?

Call-to-action button (CTA): Which button color or text gets the most clicks?

Email design: Which colors in newsletters get the most engagement?

Preheader text: Which copy will best complement the subject line?

Layout: Should I position blocks side by side or above each other?

Email text: Which tone in my email content drives the most engagement?

Email length: Is it better to have 3 or 4 sections in my newsletter?

Type of promotion: Free delivery or lowered pricing?

Testimonials: Does the social proof from Customer A or Customer B increase conversions?

Sender info: Should I use my first name or my company name to increase engagement?

3 A/B testing case studies for landing pages, ads and pop-ups

You can test other types of marketing assets beyond just email, such as landing pages, ads and pop-ups, to optimize conversions. Here are 3 examples to get you started.

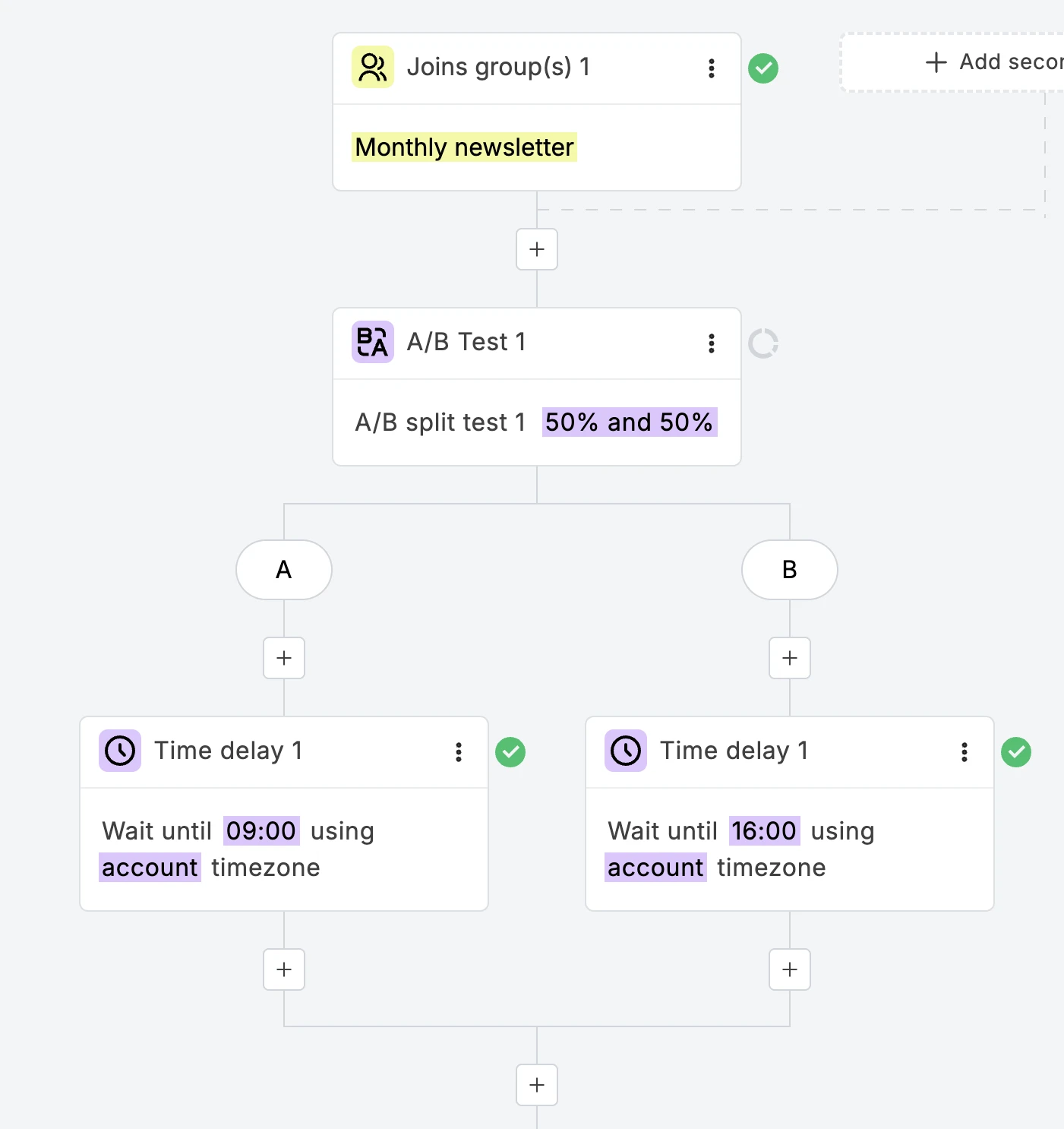

1. Long vs. short landing pages (data36)

Tomi Mester from data36 decided to improve a landing page for his online course using A/B testing. He compared the original version with a longer landing page that answered common FAQs, had more information about the course, and included four embedded videos.

Despite being much longer, Tomi found that the new version had double the conversion rate, with 99%+ statistical significance! It seems that people who wanted to buy were ready to go the extra mile and read all the relevant information before going to checkout. If you're interested in learning more, you can read the full case study.

In A/B testing, statistical significance tells you how unlikely it is that the difference between the two versions came down to chance alone. The higher the statistical significance, the more sure you can be that the difference is real. You can use an online calculator, such as this one from Neil Patel, to calculate your own.
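If you'd rather see the math than trust a calculator, here's a minimal Python sketch of a two-proportion z-test, one standard way to check significance for conversion rates. It isn't necessarily the method used in the data36 case study or by any particular online calculator, and the visitor and conversion numbers are made up for illustration.

```python
import math


def two_proportion_z_test(conv_a, n_a, conv_b, n_b):
    """Return (z, p_value) for the difference in conversion rates.

    conv_a, conv_b: number of conversions for each version
    n_a, n_b: number of visitors for each version
    """
    rate_a = conv_a / n_a
    rate_b = conv_b / n_b

    # Pooled rate under the assumption that both versions convert equally
    pooled = (conv_a + conv_b) / (n_a + n_b)
    std_error = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if std_error == 0:
        raise ValueError("cannot test when no one (or everyone) converted")

    z = (rate_b - rate_a) / std_error
    # Two-sided p-value from the standard normal distribution
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


# Made-up example: version B converts at double the rate of version A
z, p_value = two_proportion_z_test(conv_a=50, n_a=1000, conv_b=100, n_b=1000)
print(f"z = {z:.2f}, p-value = {p_value:.5f}")
```

A small p-value (commonly below 0.05) suggests the difference is unlikely to be chance alone. Some calculators present this as a percentage, roughly 1 minus the p-value, which is the kind of figure a "99%+" result refers to.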

2. Personalized banner (Sony)

Wanting to drive more conversions for their banner ads, retailer Sony decided to use more personal language and redesign with the copy: “Create your own VAIO laptop”.

They compared it with a more promotional ad (below) to see which one would get the highest click-through rates and adds to the shopping cart.

The more personal call to action led to a 6% increase in clicks, and a 21.3% increase in adds to the shopping cart. If you are planning to test banner ads, you can learn more and go deeper by reading the full case study.

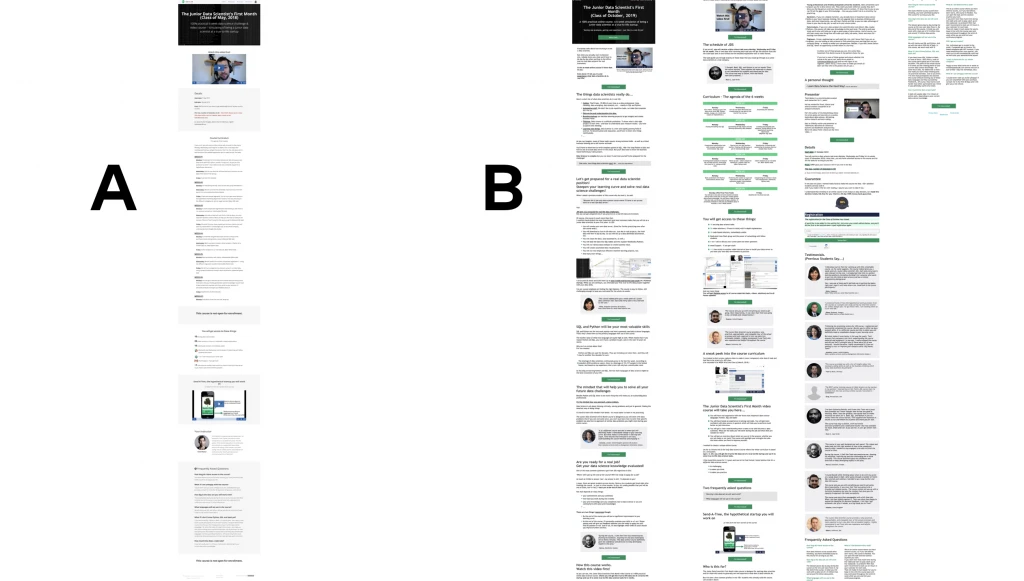

3. Exit-intent pop-ups (Crossrope)

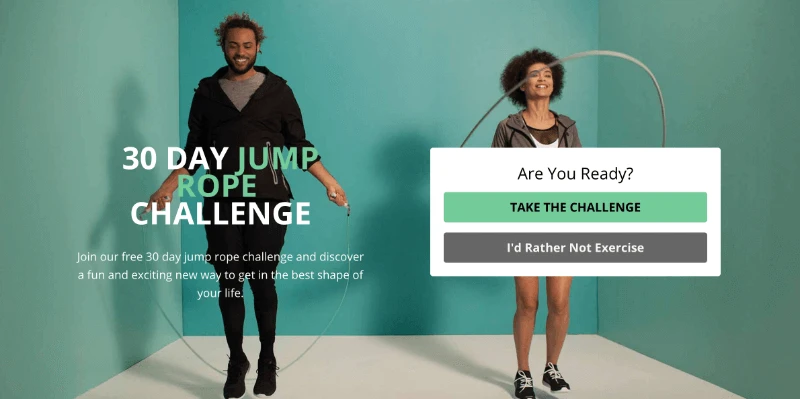

Fitness e-commerce brand Crossrope wanted to gather email list signups before their website visitors left. They first created a pop-up (below) that appeared when people moved toward the browser bar.

With this pop-up, they were able to convert 7.65% of people who were leaving. They decided to take this idea further with a fullscreen pop-up on their blog.

With this new version, they were able to convert 13.71% of website visitors who came to their blog! This suggests when done right, a larger pop-up could catch attention and increase conversions. If you're planning to test pop-ups, read the case study to learn more.

4 A/B testing success stories from MailerLite customers

Salty found what content resonates best with its audience

Claire Fitzsimmons, founder of the media company Salty, uses A/B testing to get an insight into the kind of content that resonates with her audience.

The A/B testing capabilities have been great. We can see exactly what kind of messaging resonates with our audience and what doesn't, which helps us refine our strategy and make our communication more effective.

Read Salty’s case study here:

KC Tool increased its newsletter click rates by 1%

When German hand tool distributor KC Tool was looking to switch to a new email marketing platform, MailerLite caught its attention. And one of the reasons for that was the A/B testing capabilities.

KC Tool regularly uses MailerLite’s A/B testing to optimize its weekly newsletter, which shares critical updates like new tool releases and sales.

We like to test everything. We test subject lines, the sender name, sometimes I even take 2 different product photos and test which one works best.

These continuous tests provide valuable insights into the KC Tool audience's preferences. One key finding was the audience's preference for concise communication.

Over time, we saw that people like their emails to be straight to the point, so we started cutting back text and focusing on a specific topic. This raised our click rates roughly 1% which equates to hundreds of people clicking who might not have done so.

Read KC Tool’s case study here:

Privyr found email topics that generated the highest engagement

SaaS company Privyr A/B tests automation workflows to optimize its campaigns. Its testing included finding the best welcome email messaging to persuade users to book a demo, as well as optimizing subject lines and email content to resonate better with subscribers. This led to the discovery of a highly engaging new content topic and a major boost in engagement.

Previously, our newsletter was about sales strategy and best practices. But we began to test sharing actual scripts, and we realized our audience interacted with the links a lot.

Read Privyr’s case study here:

The Remote Company’s email open rates increased by 10%

Nicole Lee of The Remote Company leveraged A/B testing to significantly increase the visibility and impact of her email newsletters.

A newsletter’s success depends on getting its subscribers to open and read the message. Using MailerLite’s subject line A/B testing feature, Nicole successfully achieved this goal. By testing different subject line versions, she was able to boost open rates by an average of 10% on tested campaigns compared to those that ran without testing.

Read The Remote Company's case study here:

Disclosure: MailerLite was part of The Remote Company until it was acquired in 2022.

Understanding the A/B test results

For email, you can refer to our industry benchmarks to find out what the standard open and click rates are for your niche. These can serve as a baseline for interpreting your results.

For landing pages, you can calculate the statistical significance by comparing the number of page visitors and the conversion rate between versions, to see whether the difference is likely to be real rather than down to chance.

Start your own A/B testing campaign now

You should now have all the tools and information you need to start testing! As you get started, remember to…

Test one thing at a time so that you can clearly see how each element impacts the results

Select a winner by click rates rather than open rates for your emails, especially if you’re comparing the content

Consistently test and adjust to keep up with changing tastes and preferences

By keeping an eye on the data, you’ll be able to make the right decisions to enhance user experience, reduce bounce rates and most importantly, drive conversions!